For ML practitioners, the natural expectation is that a new ML model that shows promising results offline will also succeed in production. But often, that's not the case. ML models that outperform on test data can underperform for real production users. This discrepancy between offline and online metrics is often a big challenge in applied machine learning.

In this article, we will explore what both online and offline metrics really measure, why they differ, and how ML teams can build models that perform well both online and offline.

The Comfort of Offline Metrics

Offline model evaluation is the first checkpoint for any model on its way to deployment. Data is usually split into training sets and validation/test sets, and evaluation results are calculated on the latter. The metrics used for evaluation vary by model type: a classification model usually uses precision, recall, AUC, and so on; a recommender system uses NDCG and MAP; and a forecasting model uses RMSE, MAE, MAPE, and so on.
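
To make a couple of these metrics concrete, here is a minimal sketch in plain Python that computes precision and recall for a classifier and NDCG@k for a single ranked list. The labels, predictions, and relevance grades are hypothetical values made up for illustration, and the helper names (`precision_recall`, `ndcg_at_k`) are not taken from any particular library.

```python
import math

def precision_recall(y_true, y_pred):
    """Precision and recall for binary labels (1 = positive class)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall

def ndcg_at_k(relevances, k):
    """NDCG@k for one ranked list; `relevances` follows the model's ranking order."""
    def dcg(rels):
        # Position i (0-based) is discounted by log2(i + 2).
        return sum(r / math.log2(i + 2) for i, r in enumerate(rels[:k]))
    ideal = dcg(sorted(relevances, reverse=True))
    return dcg(relevances) / ideal if ideal else 0.0

# Hypothetical held-out labels and model predictions.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 1, 0, 1, 0]
precision, recall = precision_recall(y_true, y_pred)

# Hypothetical graded relevance of the items a recommender ranked 1st to 4th.
ndcg = ndcg_at_k([3, 2, 0, 1], k=4)

print(f"precision={precision:.2f} recall={recall:.2f} ndcg@4={ndcg:.3f}")
```

In this toy data the classifier gets three of its four positive predictions right and finds three of the four true positives, so precision and recall are both 0.75. NDCG is slightly below 1 because the ranker placed an irrelevant item above a relevant one.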

Offline evaluation makes rapid iteration possible: you can run multiple model evaluations per day, compare their results, and get quick feedback. But it has limits. Evaluation results depend heavily on the dataset you choose. If the dataset doesn't represent production traffic, you can get a false sense of confidence. Offline evaluation also ignores online factors like latency, backend limitations, and dynamic user behavior.

The Reality Check of Online Metrics

Online metrics, by contrast, are measured in a live production setting through A/B testing or live monitoring. These metrics are the ones that matter to the business. For recommender systems, they can be funnel rates like click-through rate (CTR) and conversion rate (CVR), or retention. For a forecasting model, they can be cost savings, a reduction in out-of-stock events, and so on.

The obvious challenge with online experiments is that they're expensive. Each A/B test consumes experiment traffic that could have gone to another experiment. Results take days, sometimes even weeks, to stabilize. On top of that, online signals are often noisy, i.e., affected by seasonality and holidays, which can mean more data science bandwidth is needed to isolate the model's true effect.
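
One way to see why results need time to stabilize is to run a significance test on the same CTR lift at two different traffic volumes. The sketch below uses a standard two-proportion z-test. The click and view counts are hypothetical, and a real experiment platform would typically also handle seasonality, multiple metrics, and repeated looks at the data, none of which is covered here.

```python
import math

def two_proportion_z_test(clicks_a, views_a, clicks_b, views_b):
    """Return (absolute CTR lift of B over A, z statistic, two-sided p-value)."""
    ctr_a = clicks_a / views_a
    ctr_b = clicks_b / views_b
    # Pooled CTR under the null hypothesis that A and B perform the same.
    pooled = (clicks_a + clicks_b) / (views_a + views_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / views_a + 1 / views_b))
    z = (ctr_b - ctr_a) / se
    # Two-sided tail probability of a standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return ctr_b - ctr_a, z, p_value

# Hypothetical counts: control at 5.0% CTR, treatment at 5.5% CTR.
lift, z, p_value = two_proportion_z_test(1000, 20000, 1100, 20000)

# The same CTRs observed on one tenth of the traffic.
_, z_small, p_small = two_proportion_z_test(100, 2000, 110, 2000)

print(f"large sample: lift={lift:.4f} z={z:.2f} p={p_value:.3f}")
print(f"small sample: z={z_small:.2f} p={p_small:.3f}")
```

With the larger sample the lift clears a conventional 0.05 significance threshold. With one tenth of the traffic the identical lift does not, which is why an underpowered experiment can neither confirm nor rule out a real improvement.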

| Metric Type | Pros | Cons |
| --- | --- | --- |
| Offline metrics, e.g., AUC, accuracy, RMSE, MAPE | Fast, repeatable, and cheap | Don't reflect the real world |
| Online metrics, e.g., CTR, retention, revenue | True business impact reflecting the real world | Expensive, slow, and noisy (affected by external factors) |

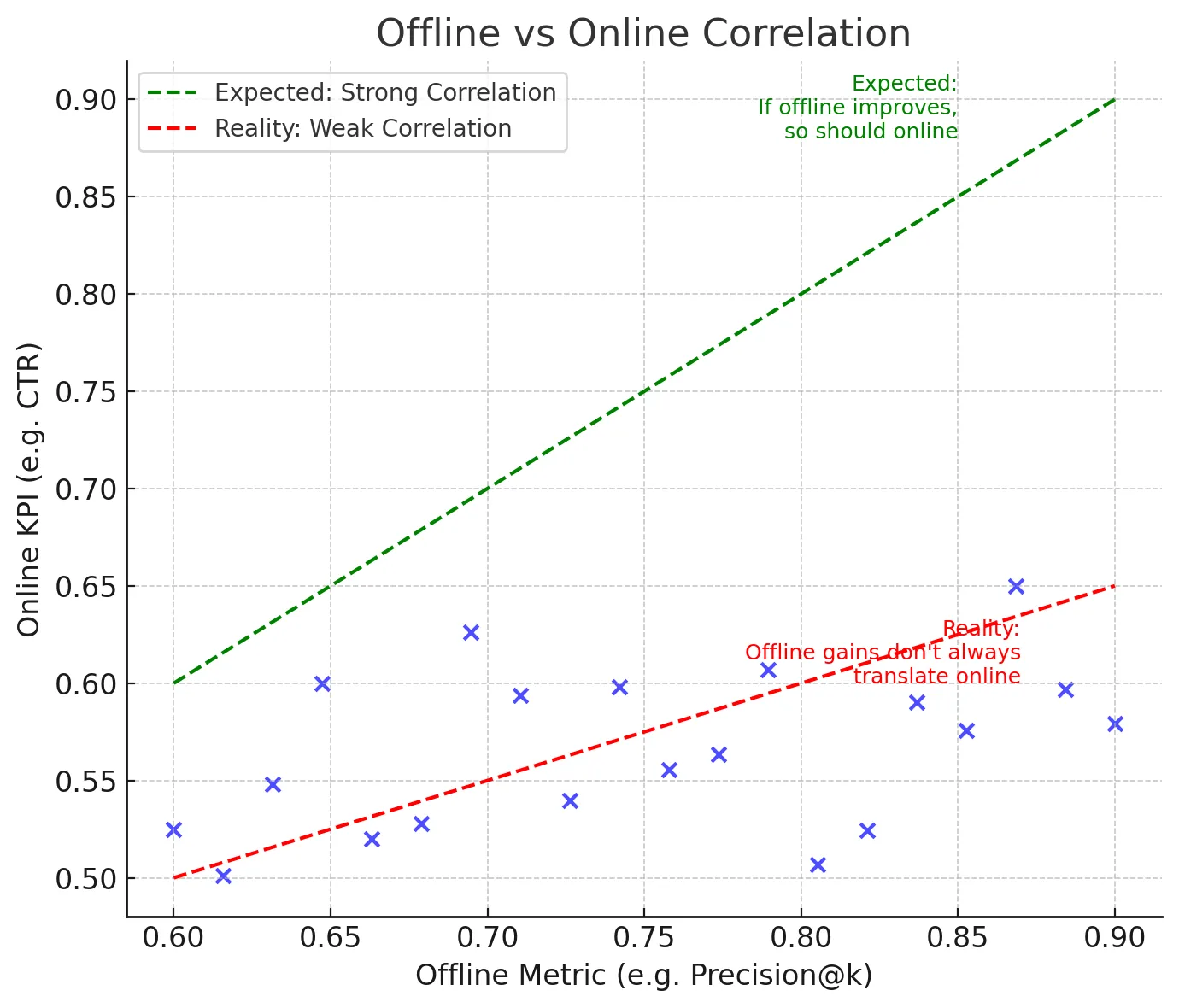

The Online-Offline Disconnect

So why do models that shine offline stumble online? Firstly, user behavior is very dynamic, and models trained on past data may not be able to keep up with current user demands. A simple example is a recommender system trained in winter that can't provide the right recommendations come summer, because user preferences have changed. Secondly, feedback loops play a pivotal part in the online-offline discrepancy. Experimenting with a model in production changes what users see, which in turn changes their behavior, which affects the data that you collect. This recursive loop doesn't exist in offline testing.

Offline metrics are considered proxies for online metrics. But often they don't line up with real-world goals. For example, optimizing Root Mean Squared Error (RMSE) minimizes overall error but can still fail to capture extreme peaks and troughs that matter a lot in supply chain planning. In addition, app latency and other factors can also affect user experience, which in turn affects business metrics.
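
The RMSE point is easy to demonstrate with a toy series. In the sketch below, the demand numbers and both forecasts are hypothetical and chosen so that the two forecasts have exactly the same RMSE, even though only one of them captures the demand spike.

```python
import math

def rmse(actual, forecast):
    """Root mean squared error over paired observations."""
    return math.sqrt(sum((a - f) ** 2 for a, f in zip(actual, forecast)) / len(actual))

# Hypothetical demand with a spike in the last period.
actual = [10, 10, 10, 10, 50]
# Perfect on quiet periods, 20 units short at the peak.
misses_peak = [10, 10, 10, 10, 30]
# 10 units high on quiet periods, exact at the peak.
catches_peak = [20, 20, 20, 20, 50]

rmse_miss = rmse(actual, misses_peak)
rmse_catch = rmse(actual, catches_peak)
peak_error_miss = abs(actual[-1] - misses_peak[-1])
peak_error_catch = abs(actual[-1] - catches_peak[-1])

print(f"RMSE: misses_peak={rmse_miss:.3f} catches_peak={rmse_catch:.3f}")
print(f"error at the peak: misses_peak={peak_error_miss} catches_peak={peak_error_catch}")
```

Both forecasts have the same total squared error, so RMSE cannot tell them apart, yet a planner who cares about the peak would strongly prefer the second one.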

Bridging the Gap

The good news is that there are ways to reduce the online-offline discrepancy.

- Choose better proxies: Choose multiple proxy metrics that can approximate business outcomes instead of over-indexing on one metric. For example, a recommender system might combine precision@k with other factors like diversity. A forecasting model might evaluate stockout reduction and other business metrics on top of RMSE.

- Study correlations: Using past experiments, we can analyze which offline metrics correlated with winning outcomes online. Some offline metrics will be consistently better than others at predicting online success. Documenting these findings and using these metrics will help the whole team know which offline metrics they can rely on.

- Simulate interactions: There are some methods in recommendation systems, like bandit simulators, that replay historical user logs and estimate what would have happened if a different ranking had been shown. Counterfactual evaluation can also help approximate online behavior using offline data. Methods like these can help narrow the online-offline gap.

- Monitor after deployment: Even after successful A/B tests, models drift as user behavior evolves (like the winter and summer example above). So it's always best to monitor both input data and output KPIs to ensure that the discrepancy doesn't silently reopen.
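
As an illustration of the "simulate interactions" idea, here is a minimal sketch of inverse propensity scoring (IPS), one common counterfactual estimator. It is not necessarily the method the bandit simulators mentioned above use. It assumes the logging system recorded the probability with which each item was shown, and that this probability was never zero for an item the new policy might pick. The log entries and the `new_policy_prob` function are hypothetical.

```python
def ips_estimate(logs, new_policy_prob):
    """Estimate the average reward a new policy would have earned on logged traffic.

    logs: list of (context, action, reward, logging_prob) tuples, where
          logging_prob is the probability the logging policy showed `action`.
    new_policy_prob: function (context, action) -> probability under the new policy.
    """
    total = 0.0
    for context, action, reward, logging_prob in logs:
        # Reweight each logged reward by how much more (or less) often
        # the new policy would have shown the same item.
        weight = new_policy_prob(context, action) / logging_prob
        total += weight * reward
    return total / len(logs)

# Hypothetical logs: the old system showed item "A" or "B" uniformly at random.
logs = [
    ("user1", "A", 1, 0.5),
    ("user2", "B", 0, 0.5),
    ("user3", "A", 1, 0.5),
    ("user4", "B", 1, 0.5),
]

def new_policy_prob(context, action):
    # Hypothetical new policy: shows "A" 90% of the time regardless of context.
    return 0.9 if action == "A" else 0.1

logged_average = sum(reward for _, _, reward, _ in logs) / len(logs)
estimate = ips_estimate(logs, new_policy_prob)

print(f"logged average reward={logged_average:.2f} IPS estimate for new policy={estimate:.2f}")
```

In practice IPS estimates can have high variance when the new policy differs a lot from the logging policy, so teams often clip the weights or use more robust variants. The estimate is best read as a screening signal before an A/B test rather than a replacement for one.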

Practical Example

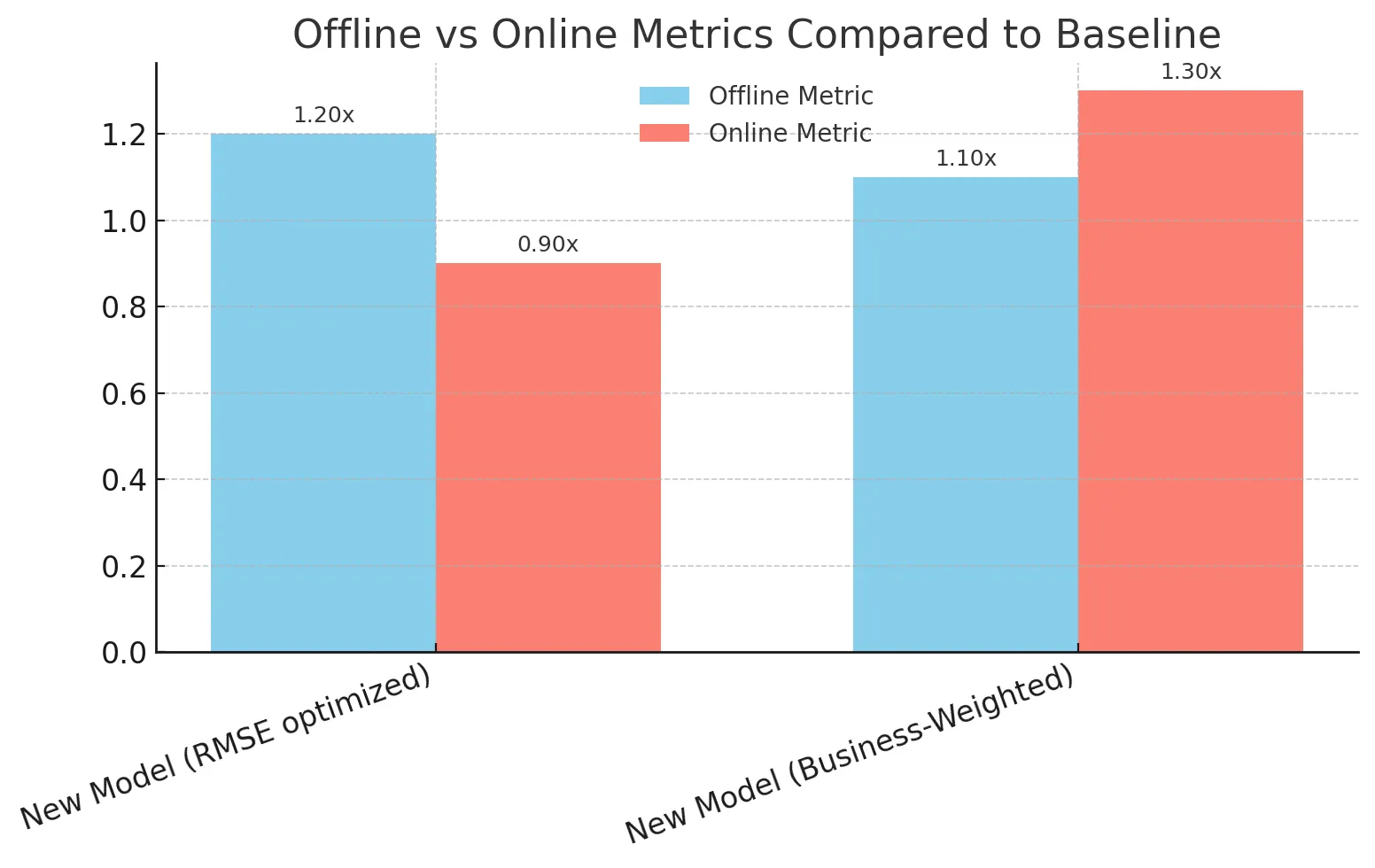

Consider a retailer deploying a new demand forecasting model. The model showed very promising results offline (in RMSE and MAPE), which made the team very excited. But when tested online, the business saw minimal improvements, and in some metrics, things even looked worse than baseline.

The problem was proxy misalignment. In supply chain planning, underpredicting demand for a trending product causes lost sales, while overpredicting demand for a slow-moving product leads to wasted inventory. The offline metric RMSE treated both as equals, but real-world costs were far from symmetric.

The team decided to redefine their evaluation framework. Instead of relying solely on RMSE, they defined a custom business-weighted metric that penalized underprediction more heavily for trending products and explicitly tracked stockouts. With this change, the next model iteration delivered both strong offline results and online revenue gains.
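
The article does not give the team's actual formula, so the following is only a sketch of what such a metric could look like. It assumes an absolute-error base, a hypothetical 3x penalty for underpredicting trending products, and a simplification in which stock on hand equals the forecast, so any underprediction counts as a stockout. All names and numbers are illustrative.

```python
def business_weighted_error(actual, forecast, trending, under_weight=3.0, over_weight=1.0):
    """Mean absolute error with a heavier penalty for underpredicting trending products.

    The default weights are hypothetical; in practice they would be derived
    from the relative cost of lost sales versus excess inventory.
    """
    total = 0.0
    for a, f, is_trending in zip(actual, forecast, trending):
        error = a - f  # positive means demand was underpredicted
        if error > 0 and is_trending:
            total += under_weight * error
        else:
            total += over_weight * abs(error)
    return total / len(actual)

def stockout_count(actual, forecast):
    """Products where demand exceeded the forecast (assumes stock equals forecast)."""
    return sum(1 for a, f in zip(actual, forecast) if f < a)

# Hypothetical weekly demand for three products; the first and third are trending.
actual = [100, 40, 80]
trending = [True, False, True]
under_forecast = [90, 40, 70]   # 10 units short on both trending products
over_forecast = [110, 40, 90]   # 10 units high on both trending products

score_under = business_weighted_error(actual, under_forecast, trending)
score_over = business_weighted_error(actual, over_forecast, trending)
stockouts_under = stockout_count(actual, under_forecast)
stockouts_over = stockout_count(actual, over_forecast)

print(f"under-forecast: score={score_under:.2f} stockouts={stockouts_under}")
print(f"over-forecast:  score={score_over:.2f} stockouts={stockouts_over}")
```

The two forecasts miss by the same number of units, so a symmetric metric like RMSE would score them identically. The weighted metric ranks the under-forecast as clearly worse, which matches the cost asymmetry described above.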

Closing Thoughts

Offline metrics are like rehearsals before a dance performance: you can learn quickly, test ideas, and fail in a small, controlled environment. Online metrics are like the actual performance: they measure real audience reactions and whether your changes deliver true business value. Neither alone is enough.

The real challenge lies in finding the offline evaluation frameworks and metrics that best predict online success. When this is done well, teams can experiment and innovate faster, minimize wasted A/B tests, and build better ML systems that perform well both offline and online.

Frequently Asked Questions

A. Because offline metrics don't capture the dynamic user behavior, feedback loops, latency, and real-world costs that online metrics measure.

A. They're fast, cheap, and repeatable, making quick iteration possible during development.

A. They reflect true business impact, like CTR, retention, or revenue, in live settings.

A. By choosing better proxy metrics, studying correlations, simulating interactions, and monitoring models after deployment.

A. Yes, teams can design business-weighted metrics that penalize errors differently to reflect real-world costs.
